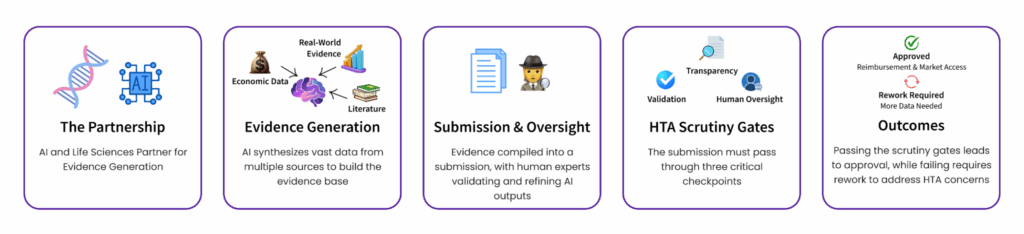

As AI rapidly reshapes the evidence landscape, health technology assessment (HTA) bodies are beginning to define clear expectations for its use in submissions. At CapeStart, we work closely with life sciences clients navigating these changes, especially those integrating generative AI (GenAI) into literature reviews, real-world evidence (RWE) generation, economic modeling, Joint Clinical Assessment (JCA) support, Clinical Evaluation Reports (CERs), Patient-Reported Outcomes (PROs), and Global Value Dossiers (GVDs). Whether you’re preparing a GVD or submitting to agencies like NICE, understanding what HTA bodies expect from AI-generated content is critical. This guide offers an overview of how HTA agencies are considering AI for evidence generation.

HTA bodies are increasingly acknowledging the potential of AI—particularly generative AI—but emphasize rigorous standards around transparency, validation, and human oversight.

Understanding the Role of AI in Evidence Generation

To understand how HTA bodies are evaluating AI in evidence generation, it helps to look first at the ways AI can enhance that work. Key examples include:

- Automated Literature Reviews: Natural language processing (NLP) can rapidly sift through vast scientific literature.

- Real-World Data Analysis: Machine learning models can detect patterns in electronic health records, claims databases, and registries.

- Predictive Modeling: AI can estimate outcomes for subpopulations or predict long-term effects where trial data is limited.

By synthesizing massive amounts of data and information, AI has the power to advance many critical functions of life sciences work. These functions often depend on high-demand, PhD-level experts who carry out the core workflow and supply the professional judgment that keeps the exercise thoughtful and rigorous. However, those same experts are also mired in tedious document gathering, mass assessment, and onerous summarization, all of which could be measurably more efficient with the help of AI. For HTA agencies, the question isn’t whether AI is useful; it’s whether AI-derived evidence is trustworthy enough to inform reimbursement and policy decisions.

NICE Leadership: Clear Guidance with Necessary Safeguards

In August 2024, NICE published a position statement outlining how AI should be used in evidence generation and reporting. The guidance underscores several key principles:

Augmentation, not replacement, of human expertise: Critical decisions and oversight remain human-led.

Transparency is essential: Submissions must declare AI use, explain methods, and justify why AI added value over traditional methods.

Rigorous validation: Any AI used for treatment effect estimation must undergo sensitivity analyses, external checks, and triangulation with existing clinical evidence. Use established reporting tools such as the PALISADE checklist and TRIPOD+AI, along with explainability techniques such as LIME and SHAP, to support transparent reporting, explainability, and ethical deployment.

Early engagement recommended: Organizations are encouraged to discuss AI approaches with NICE early in the development process.

Other HTAs: Watching, Waiting, Adopting NICE’s Principles

Agencies like CADTH (Canada), HAS (France), G‑BA/IQWiG (Germany), SMC (Scotland), and PBAC (Australia) have not issued formal AI policies—but researchers and industry reports indicate early consensus on prioritizing manual validation and dual-review processes for AI-assisted evidence.

Recommendation for buyers: Treat NICE’s guidance as a gold standard and proactively apply these principles across all jurisdictions.

Key Applications Under Scrutiny

Systematic Literature Review (SLR): GenAI can help automate search, screening, data extraction, and even code generation for meta-analysis. However, HTAs expect:

- AI-driven screening with human oversight

- Clear rationale for each inclusion/exclusion decision

- Validation data and error rates
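One way to report the validation data and error rates listed above is to compare AI screening decisions against a human-adjudicated reference set. The sketch below is illustrative only: the function name `screening_error_rates`, the boolean include/exclude encoding, and the sample labels are all assumptions made for this example, not requirements from any HTA body.

```python
from dataclasses import dataclass


@dataclass
class ScreeningMetrics:
    sensitivity: float  # share of truly relevant records the AI included
    specificity: float  # share of truly irrelevant records the AI excluded
    false_negative_rate: float  # share of relevant records the AI missed


def screening_error_rates(human: list[bool], ai: list[bool]) -> ScreeningMetrics:
    """Compare AI include/exclude calls with human reference decisions.

    True means "include". The human labels are treated as ground truth,
    which is itself an assumption (for example, adjudicated dual review).
    """
    if len(human) != len(ai):
        raise ValueError("human and ai must have the same length")
    tp = sum(h and a for h, a in zip(human, ai))
    fn = sum(h and not a for h, a in zip(human, ai))
    tn = sum(not h and not a for h, a in zip(human, ai))
    fp = sum(not h and a for h, a in zip(human, ai))
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("reference set needs at least one include and one exclude")
    sensitivity = tp / (tp + fn)
    return ScreeningMetrics(sensitivity, tn / (tn + fp), 1 - sensitivity)


# Hypothetical sample: 4 relevant and 6 irrelevant abstracts.
human_labels = [True, True, True, True, False, False, False, False, False, False]
ai_labels = [True, True, True, False, False, False, False, False, True, False]
metrics = screening_error_rates(human_labels, ai_labels)
print(metrics)
```

In a screening context the false-negative rate usually deserves the most attention, because a wrongly excluded study never reaches a human reviewer unless the workflow samples exclusions for manual checking.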

Real-World Evidence (RWE): HTA bodies see potential in AI for structured extraction from unstructured sources. But they require:

- Proven data provenance

- Cross-validation with conventional data sources

- Supplementary—not primary—role for AI-generated outputs

Health Economic Modeling: AI holds promise for building, optimizing, and validating economic models. Still, HTA bodies demand:

- Sensitivity analyses and scenario testing

- Pre-specified validations with held-out data

- Triangulation with clinical evidence for any AI-influenced outcomes
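The sensitivity analyses named above can take many forms. A minimal sketch of the simplest one, a one-way sensitivity analysis on an incremental cost-effectiveness ratio (ICER), is shown below. The function names, parameter names, base-case values, and ranges are all hypothetical and chosen only to make the arithmetic easy to follow; a real model would draw them from the submission's evidence base.

```python
def icer(cost_new, cost_old, qaly_new, qaly_old):
    """Incremental cost-effectiveness ratio: extra cost per extra QALY."""
    delta_qaly = qaly_new - qaly_old
    if delta_qaly == 0:
        raise ValueError("incremental QALYs are zero; ICER is undefined")
    return (cost_new - cost_old) / delta_qaly


def one_way_sensitivity(base, ranges):
    """Vary one input at a time across its low/high bounds, holding the rest at base."""
    results = {}
    for name, (low, high) in ranges.items():
        results[name] = tuple(icer(**{**base, name: value}) for value in (low, high))
    return results


# Hypothetical base case and ranges (all values invented for illustration).
base_case = {"cost_new": 50_000, "cost_old": 30_000, "qaly_new": 6.0, "qaly_old": 5.0}
ranges = {"cost_new": (45_000, 55_000), "qaly_new": (5.5, 7.0)}

result = one_way_sensitivity(base_case, ranges)
for name, (low_icer, high_icer) in result.items():
    print(f"{name}: ICER from {low_icer:,.0f} to {high_icer:,.0f} per QALY")
```

With these invented inputs, the ICER moves far more when the QALY estimate changes than when the cost estimate does. That kind of finding shows reviewers which AI-influenced inputs need the strongest triangulation with clinical evidence.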

Best-Practice Vendor Checklist for HTA-Ready AI

| Requirement | Essential Vendor Capabilities |
| --- | --- |
| Transparency | AI-use declarations, explainability, rationale for each decision |
| Validation | Proven performance metrics, cross-validation, pilot data |
| Human Oversight | Clearly defined roles and review pathways |
| Regulatory Alignment | Adherence to frameworks PALISADE, TRIPOD+AI, Cochrane, GIN |
| Security & Ethics | Bias mitigation, data privacy, cybersecurity risk reduction |
| Stakeholder Engagement | Early consultation with NICE and future HTA bodies |

What’s Next?

As comfort with and adoption of AI grow, expect increasing harmonization as global HTAs align around principles initiated by NICE and emerging EU-wide policies. This will mean:

- Agencies will demand higher accountability around AI performance and transparency as digital evidence becomes mainstream.

- Vendors will need to invest in explainable AI toolkits, on-premises deployment, services, and domain expertise to support HTA-grade submissions.

Final Takeaway

Generative AI has tremendous potential to accelerate evidence synthesis—but successful HTA submissions require a balanced approach. Transparency, validation, human oversight, and regulatory alignment are not optional—they’re essential. For buyers and vendors alike, aligning with NICE’s position and proactively following these guidelines is your best path to future-ready, AI-supported market access.