The pharmaceutical industry is at a pivotal moment in the AI landscape. After years of cautious experimentation confined to isolated labs and pilot programs, something fundamentally different is unfolding in 2026. The central question has shifted from “Does AI work?” to “How do we deploy it safely and at scale?”, especially in organizations where a single mistake can endanger lives, invite massive regulatory penalties, or jeopardize research worth billions of dollars.

This shift isn’t happening in isolation. As agentic AI systems move from impressive demos into real production workflows (autonomously analyzing clinical trial data, orchestrating complex drug discovery pipelines, and interfacing with regulatory databases), the security architecture governing them has become mission-critical. That’s why our third prediction for 2026 feels so timely: by year-end, role-based tool isolation will stand as the gold standard for pharma AI deployments.

The Rise of Agentic AI and Role-Based Tool Isolation in Pharmaceutical Operations

Traditional pharma AI played a supportive role. It answered queries, predicted drug interactions, or spotted manufacturing anomalies, always with humans firmly in control and reviewing outputs before any action. But 2026 marks the turning point where agentic systems truly take the initiative.

These systems don’t merely respond. They plan, decide, and execute multi-step workflows autonomously. They call APIs, query databases, trigger processes, and even coordinate with other agents, all at machine speed. For example, a research agent can search patent databases, cross-reference molecular structures with internal libraries, propose synthesis pathways, and book lab equipment for validation, often before a scientist checks their morning email.

This leap from passive analysis to active execution dramatically alters the risk landscape. When an AI doesn’t just suggest adjusting a clinical trial protocol but actually updates the trial management system, notifies sites, and regenerates documentation, the consequences of an error or a compromise escalate sharply. A single misbehaving or hijacked agent can now trigger widespread operational failures across highly regulated workflows.

Why Traditional Access Controls Fall Short without Role-Based Isolation

Most pharma organizations have tried to secure AI by extending familiar role-based access control (RBAC) frameworks built for human users. A scientist gets read-access to clinical databases. A manufacturing lead gets write privileges in production systems. The approach is straightforward, auditable, and battle-tested over decades.

However, AI agents behave nothing like humans navigating GUIs. They invoke tools rapidly: hundreds of API calls, code executions, or database queries per second. An agent launched under a broadly permissioned service account becomes a standing vulnerability. Those permissions are rarely reviewed, seldom narrowed over time, and often expose far more data than any individual human could access.

The problem intensifies with the non-deterministic character of modern AI. Unlike rigid traditional software, agentic systems reason, adapt, and handle novel situations in ways impossible to pre-map exhaustively. Static permissions simply can’t keep up.

Recent analyses underscore the urgency. Studies of over 30,000 AI agent extensions revealed vulnerabilities in more than 25% of cases. When these run with inherited broad permissions, one breach can compromise entire environments. In pharma, where the data spans patient PHI, proprietary compounds, and competitive intelligence, the stakes feel existential.

Role-Based Tool Isolation: A Fundamental Architectural Shift

Role-based tool isolation marks a profound change in how we secure AI agent permissions. Instead of assigning broad access at deployment time and hoping for the best, we enforce fine-grained controls at the tool invocation layer, at the moment the agent tries to call an API, query data, or run code.

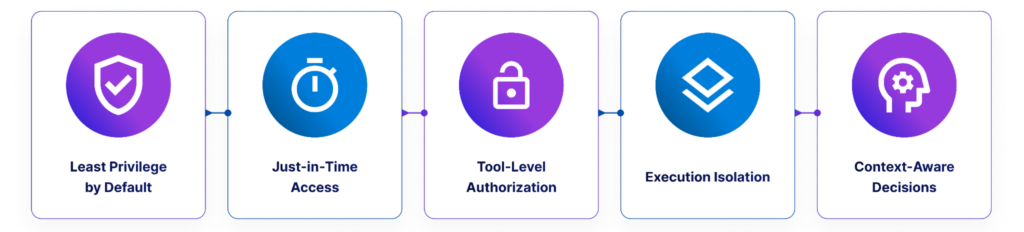

The approach borrows directly from zero-trust principles already proven for human users:

- Least privilege by default: Agents get only the minimal tools and data needed for their exact task.

- Just-in-time access: Permissions activate dynamically for specific actions and are revoked immediately afterward.

- Tool-level authorization: Every invocation triggers explicit checks before execution.

- Execution isolation: Risky operations occur in sandboxed environments with strict input validation.

- Context-aware decisions: Approvals factor in the requester (human or agent), intent, and full operational context.

The control layers behind secure agentic AI
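To make least privilege and just-in-time access concrete, here is a minimal Python sketch, assuming a grant is scoped to one agent and one tool, expires after a short TTL, and is revoked on its first use. `JitGrant`, `issue_grant`, and the 30-second default are hypothetical names and values chosen for illustration, not part of any particular product.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class JitGrant:
    """Hypothetical single-use, time-limited permission for exactly one tool."""
    agent_id: str
    tool: str
    expires_at: float
    grant_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    used: bool = False

    def consume(self, tool: str, now: Optional[float] = None) -> bool:
        """Return True at most once: right tool, not yet used, not yet expired."""
        now = time.time() if now is None else now
        if self.used or tool != self.tool or now >= self.expires_at:
            return False
        self.used = True  # revoke immediately after the single permitted use
        return True


def issue_grant(agent_id: str, tool: str, ttl_seconds: float = 30.0,
                now: Optional[float] = None) -> JitGrant:
    """Grant access to one tool only, lapsing after ttl_seconds."""
    now = time.time() if now is None else now
    return JitGrant(agent_id=agent_id, tool=tool, expires_at=now + ttl_seconds)


grant = issue_grant("lit-review-agent", "search_patents", now=1000.0)
print(grant.consume("query_clinical_db", now=1005.0))  # False: not the granted tool
print(grant.consume("search_patents", now=1005.0))     # True: right tool, within TTL
print(grant.consume("search_patents", now=1006.0))     # False: already consumed
```

Passing `now` explicitly keeps the example deterministic; a real deployment would likely also persist grants and record each issuance in the audit trail.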


In practice, a clinical analysis agent assisting a research scientist inherits scoped permissions matching that scientist’s role, but strictly limited to the tools and datasets relevant to the current task. When the agent attempts a database query, the system doesn’t merely check theoretical access. It verifies this exact query, for this purpose, by this user, at this moment.
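One way to express that per-call check is to compute the agent’s effective scope as the intersection of the user’s role permissions and the tools the task declares up front, then test every call against it. This is a sketch under assumptions: the role catalogue, dataset names, and the `Task`, `effective_scope`, and `authorize` helpers are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

Permission = Tuple[str, str]  # (tool, dataset)

# Hypothetical role catalogue: what each human role may do.
ROLE_PERMISSIONS = {
    "research_scientist": frozenset({
        ("query_db", "clinical_outcomes"),
        ("query_db", "compound_library"),
        ("search_literature", "pubmed_mirror"),
    }),
    "manufacturing_lead": frozenset({
        ("query_db", "batch_records"),
        ("update_record", "batch_records"),
    }),
}


@dataclass(frozen=True)
class Task:
    """What the agent was launched to do, and on whose behalf."""
    user_id: str
    user_role: str
    purpose: str                     # carried along for the audit trail
    declared: FrozenSet[Permission]  # what the task says it needs


def effective_scope(task: Task) -> FrozenSet[Permission]:
    """The agent never exceeds its user's role, nor its task's declared needs."""
    return ROLE_PERMISSIONS.get(task.user_role, frozenset()) & task.declared


def authorize(task: Task, tool: str, dataset: str) -> bool:
    """Evaluated on every call, for this exact tool and dataset."""
    return (tool, dataset) in effective_scope(task)


task = Task("u-123", "research_scientist", "adverse-event review",
            frozenset({("query_db", "clinical_outcomes"),
                       ("update_record", "batch_records")}))
print(authorize(task, "query_db", "clinical_outcomes"))   # True
print(authorize(task, "query_db", "compound_library"))    # False: not needed by the task
print(authorize(task, "update_record", "batch_records"))  # False: not in the user's role
```

Because the scope is recomputed from the role catalogue on each call, a change to the user’s role takes effect on the agent’s very next invocation.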

Traditional vs. Role-Based Tool Isolation: A Comparison

| Dimension | Traditional Service Account Model | Role-Based Tool Isolation |
|---|---|---|
| Permission Scope | Broad, static permissions set at deployment | Granular, tool-specific permissions granted just-in-time |
| Access Review | Manual, periodic (often missed) | Continuous validation at every tool invocation |
| Audit Trail | High-level system logs | Tool-level traceability with full context |
| Risk Profile | A single compromise exposes the entire permission set | Exposure limited to a specific tool and time window |
| Compliance | Difficult to demonstrate least privilege | Least privilege and traceability that support 21 CFR Part 11 and GxP alignment |

The Regulatory Imperative Driving Adoption

Security arguments alone would suffice, but regulations are pushing even harder. FDA’s 21 CFR Part 11 demands rigorous access controls, audit trails, and data integrity for electronic records in drug development. EU Annex 11 sets parallel standards for GMP computerized systems.

When autonomous agents create clinical documents, update batch records, or alter trial protocols, they generate regulated electronic records. Systems must prove who authorized each action, what data was touched, why permission existed, and preserve an immutable decision chain.

Traditional shared service accounts break this linkage. You lose traceability back to the authorizing human. Role-based tool isolation preserves accountability: logs reveal not merely that a tool ran, but which user’s context approved it, for what reason, and within which bounds. This aligns closely with the joint FDA-EMA Guiding Principles of Good AI Practice in Drug Development released in early 2026.
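A common way to approximate an immutable decision chain is a hash-chained, append-only log: each entry embeds the hash of the previous one, so any later edit becomes detectable. Note that a hash chain provides tamper evidence rather than true immutability; production systems would typically pair it with write-once storage and signatures. The `AuditLog` class and its field names are assumptions, chosen to mirror the who, what, why, and bounds questions above.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log: editing any past entry breaks the chain."""
    entries: List[dict] = field(default_factory=list)

    def append(self, *, agent_id: str, tool: str, authorized_by: str,
               reason: str, scope: str, timestamp: str) -> None:
        body = {
            "agent_id": agent_id,
            "tool": tool,
            "authorized_by": authorized_by,  # the human whose context approved it
            "reason": reason,
            "scope": scope,
            "timestamp": timestamp,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash and check each entry links to its predecessor."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append(agent_id="clin-agent-7", tool="update_protocol",
           authorized_by="dr.lee", reason="amendment 3 sign-off",
           scope="trial:ABC-001/write", timestamp="2026-03-02T10:15:00Z")
print(log.verify())  # True
log.entries[0]["reason"] = "edited later"
print(log.verify())  # False: the stored hash no longer matches
```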

Implementation Patterns Gaining Traction in 2026

Pharma AI teams are adopting several practical patterns to implement role-based tool isolation.

The Tools Gateway Pattern

All agent tool calls route through a centralized gateway that enforces checks, validates inputs, isolates execution, and logs comprehensively. Microsoft’s Agent 365 platform, generally available since May 2026, exemplifies this with built-in runtime threat protection and investigative tools.
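The gateway idea can be sketched as a single choke point that every tool call must pass through. This is a generic illustration, not Microsoft Agent 365’s API: `ToolsGateway`, `ToolDenied`, and the callback signatures are hypothetical, and sandboxed execution is omitted to keep the control flow visible.

```python
import logging
from typing import Any, Callable, Dict, Tuple

log = logging.getLogger("tools_gateway")


class ToolDenied(Exception):
    """Raised when the gateway refuses a tool call."""


class ToolsGateway:
    """Every agent tool call is authorized, validated, logged, then executed here."""

    def __init__(self, authorize: Callable[[str, str], bool]):
        self._authorize = authorize  # e.g. a lookup against a policy engine
        self._tools: Dict[str, Tuple[Callable[..., Any], Callable[[dict], bool]]] = {}

    def register(self, name: str, func: Callable[..., Any],
                 validator: Callable[[dict], bool]) -> None:
        self._tools[name] = (func, validator)

    def call(self, agent_id: str, name: str, args: dict) -> Any:
        if name not in self._tools:
            log.warning("DENY unknown tool agent=%s tool=%s", agent_id, name)
            raise ToolDenied(f"unknown tool {name!r}")
        func, validator = self._tools[name]
        if not self._authorize(agent_id, name):
            log.warning("DENY agent=%s tool=%s", agent_id, name)
            raise ToolDenied(f"{agent_id} may not call {name}")
        if not validator(args):
            log.warning("INVALID agent=%s tool=%s args=%r", agent_id, name, args)
            raise ToolDenied(f"invalid arguments for {name}")
        log.info("ALLOW agent=%s tool=%s", agent_id, name)
        return func(**args)


allowed = {("lit-agent", "search_patents")}
gateway = ToolsGateway(lambda agent, tool: (agent, tool) in allowed)
gateway.register(
    "search_patents",
    lambda query: [f"result for {query}"],  # stand-in for a real patent search
    lambda args: set(args) == {"query"} and isinstance(args["query"], str),
)
print(gateway.call("lit-agent", "search_patents", {"query": "kinase inhibitor"}))
```

Because agents never hold the underlying credentials, revoking access or tightening validation happens in one place rather than across every agent.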

Policy-as-Code Enforcement

Teams codify policies declaratively, mapping human roles to allowed tools and data scopes. Policy engines evaluate full context (user role, data sensitivity, timing, agent behavior) for adaptive, nuanced decisions beyond binary allow/deny.
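A minimal policy-as-code sketch follows, assuming policies are plain data that map roles to tools with conditions, and that the engine can return a third outcome, “require approval,” alongside allow and deny. The roles, sensitivity tiers, same-site rule, and 0.8 anomaly threshold are hypothetical; production teams would more likely use a dedicated policy language such as Open Policy Agent’s Rego or AWS Cedar.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"  # pause until a human signs off


# Hypothetical declarative policy: role -> tool -> conditions.
POLICY = {
    "clinical_scientist": {
        "read_outcomes": {"max_sensitivity": "phi"},
        "write_trial_system": {"max_sensitivity": "phi", "needs_approval": True},
    },
    "manufacturing_lead": {
        "read_batch_records": {"max_sensitivity": "confidential", "same_site_only": True},
    },
}

# Hypothetical sensitivity tiers, ordered least to most sensitive.
SENSITIVITY = ["public", "internal", "confidential", "phi"]
ANOMALY_THRESHOLD = 0.8  # hypothetical cut-off from behavioral monitoring


@dataclass(frozen=True)
class Context:
    role: str
    tool: str
    data_sensitivity: str
    user_site: str = ""
    data_site: str = ""
    anomaly_score: float = 0.0  # 0.0 means the agent is behaving normally


def evaluate(ctx: Context) -> Decision:
    rule = POLICY.get(ctx.role, {}).get(ctx.tool)
    if rule is None:
        return Decision.DENY  # default deny: tool not mapped to this role
    too_sensitive = (SENSITIVITY.index(ctx.data_sensitivity)
                     > SENSITIVITY.index(rule["max_sensitivity"]))
    if too_sensitive:
        return Decision.DENY
    if rule.get("same_site_only") and ctx.user_site != ctx.data_site:
        return Decision.DENY
    if rule.get("needs_approval") or ctx.anomaly_score > ANOMALY_THRESHOLD:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW


print(evaluate(Context("clinical_scientist", "write_trial_system", "phi")).value)
# require_approval
```

Keeping the policy as data means it can be versioned, reviewed, and tested like any other regulated artifact.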

Sandbox Execution Environments

High-risk actions (code runs, database writes, external calls) execute in contained environments with resource caps and network limits. Anomalies trigger throttling, blocking, or alerts to security teams.
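The anomaly-response half of this pattern can be sketched as a per-agent sliding-window call counter that escalates from throttling to blocking and raises an alert. The sandbox itself (containers, resource limits, network policy) is out of scope here, and `CallRateMonitor`, its thresholds, and the `alert` callback are hypothetical placeholders.

```python
from collections import deque
from enum import Enum
from typing import Callable, Deque, Dict, Set


class Action(Enum):
    OK = "ok"
    THROTTLE = "throttle"
    BLOCK = "block"


class CallRateMonitor:
    """Sliding-window call counter per agent; escalates from throttle to block."""

    def __init__(self, window_s: float = 1.0, throttle_at: int = 50,
                 block_at: int = 200, alert: Callable[[str], None] = print):
        self.window_s = window_s
        self.throttle_at = throttle_at
        self.block_at = block_at
        self.alert = alert  # e.g. a hook into the security team's alerting
        self._calls: Dict[str, Deque[float]] = {}
        self._blocked: Set[str] = set()

    def record(self, agent_id: str, now: float) -> Action:
        if agent_id in self._blocked:
            return Action.BLOCK  # stays blocked until a human intervenes
        calls = self._calls.setdefault(agent_id, deque())
        calls.append(now)
        while calls and calls[0] <= now - self.window_s:
            calls.popleft()  # drop calls that fell out of the window
        if len(calls) >= self.block_at:
            self._blocked.add(agent_id)
            self.alert(f"agent {agent_id} blocked: {len(calls)} calls in {self.window_s}s")
            return Action.BLOCK
        if len(calls) >= self.throttle_at:
            return Action.THROTTLE
        return Action.OK


monitor = CallRateMonitor(window_s=1.0, throttle_at=3, block_at=5)
for t in (0.0, 0.1, 0.2, 0.3, 0.4):
    print(t, monitor.record("agent-x", t).value)
```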

Real-World Impact: Operational Changes

Adopting role-based tool isolation delivers concrete benefits:

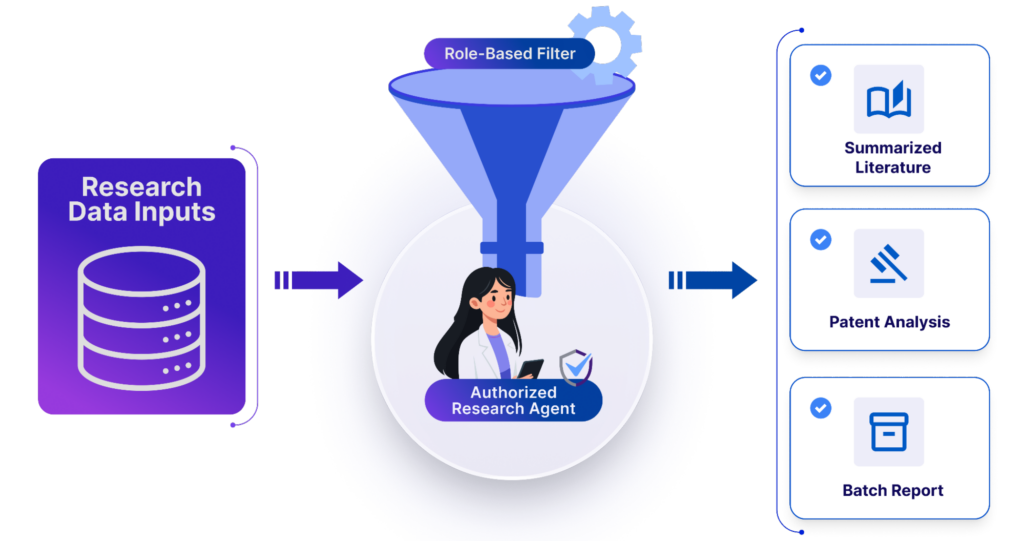

- Research scientists deploy literature review or patent-analysis assistants without broad credential provisioning. The agent automatically inherits read-only permissions.

- Manufacturing agents query batch records within facility scope but are blocked from unrelated formulation data.

- Clinical agents read patient outcomes yet require explicit human sign-off for writes to trial systems.

- As projects evolve (scope expands, trial phases advance), agent permissions follow the user’s role automatically, with no manual service-account rework.

Role-based filtering for secure agent workflows


Early adopters report 25% faster audit prep, 90% quicker remediation of misconfigurations, and, most importantly, true continuous compliance instead of periodic spot-checks.

The Challenges

Progress moves quickly, yet real hurdles persist. Tooling matures unevenly. Benchmarks show inconsistent tool-abuse detection across products. Many teams still struggle to catalog every agent, let alone apply uniform policies.

Performance matters too. Validating every invocation introduces latency: only microseconds per check, but multiplied across hundreds of calls. Teams manage this by caching decisions for low-risk operations and optimizing hot paths.
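The caching trade-off can be sketched as a decision cache that short-circuits only tools on a low-risk allowlist, with a short TTL, while every other tool is re-evaluated on each call. `DecisionCache`, `LOW_RISK_TOOLS`, and the 60-second TTL are assumptions for illustration.

```python
import time
from typing import Callable, Dict, Tuple

# Hypothetical allowlist of tools considered safe to cache decisions for.
LOW_RISK_TOOLS = {"search_literature", "read_public_reference"}


class DecisionCache:
    """Caches allow/deny results for low-risk tools only, with a short TTL."""

    def __init__(self, evaluate: Callable[[str, str], bool], ttl_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._evaluate = evaluate  # the full (slower) policy check
        self._ttl_s = ttl_s
        self._clock = clock
        self._cache: Dict[Tuple[str, str], Tuple[bool, float]] = {}
        self.evaluations = 0  # how many times the full check actually ran

    def check(self, agent_id: str, tool: str) -> bool:
        if tool not in LOW_RISK_TOOLS:
            self.evaluations += 1
            return self._evaluate(agent_id, tool)  # high-risk: never cached
        key = (agent_id, tool)
        now = self._clock()
        cached = self._cache.get(key)
        if cached is not None and now < cached[1]:
            return cached[0]  # fresh cached decision
        self.evaluations += 1
        allowed = self._evaluate(agent_id, tool)
        self._cache[key] = (allowed, now + self._ttl_s)
        return allowed
```

The TTL bounds how stale a cached decision can get after a role change, which is why high-risk tools bypass the cache entirely.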

The deepest challenge remains cultural. Security professionals must shift from static perimeters to dynamic, context-driven models. Developers need to build isolation in from the start rather than retrofit it. Business users must accept that greater autonomy comes with guardrails they cannot override.

Looking Forward: Establishing the New Baseline

By Q4 2026, role-based tool isolation will likely move from differentiator to non-negotiable expectation. No serious pharma company would run trials or manufacturing systems without audit trails and access controls. AI agents will soon be held to the same standard.

Regulatory momentum will accelerate this. With the FDA and EMA signaling more specific AI guidance over the coming 18 months (building on their January 2026 joint principles), the ability to demonstrate robust access control will be central. Early implementers gain clear compliance and competitive advantages.

Ultimately, pharma leaders in 2027 and beyond won’t win by deploying the most agents or the most autonomous ones. They’ll win by scaling AI while preserving the trust, transparency, and accountability our industry requires. Role-based tool isolation isn’t merely a security tactic. It’s the bedrock enabling trustworthy, production-grade agentic AI.

The pilot era has ended. The production era insists we build this right. And in 2026, the industry is stepping up to meet exactly that challenge.

The new baseline for controlled AI deployment


Key Takeaways

- Agentic AI in pharma has advanced from pilots to production, reshaping security risks dramatically.

- Traditional service accounts create persistent vulnerabilities when applied to autonomous agents.

- Role-based tool isolation delivers granular, invocation-level permissions with least privilege and just-in-time access.

- Regulations like 21 CFR Part 11 and EU Annex 11 demand audit trails linking actions to human authorization.

- By late 2026, structured agent permission frameworks will become the baseline for pharma AI.

Author’s Note: This article was supported by AI-based research and writing, with Claude 4.5 assisting in the creation of text and images.

FAQs

What exactly is role-based tool isolation?

A security model where AI agents receive limited, task-specific permissions only when they call a tool, ensuring access is controlled based on the task, context, and authorized user.

Why can't traditional RBAC handle AI agents?

Traditional RBAC works for predictable human behavior but breaks down under agents’ rapid, non-deterministic tool invocations (hundreds per second). Broad service accounts become unmonitored vulnerabilities, especially when the agents using them adapt in unexpected ways.

How does role-based isolation help with regulatory compliance like 21 CFR Part 11?

It maintains a clear, immutable chain of accountability by logging not just what the agent did, but which human context authorized it, for what purpose, and under which constraints, directly supporting audit trails and data integrity requirements.

What performance trade-offs come with role-based tool isolation?

Validation at every tool call adds latency (microseconds per call), which compounds in long workflows. Teams can mitigate this with policy caching for low-risk operations and optimized paths, balancing security with speed.

Is role-based tool isolation already in use in 2026?

Yes, patterns like tools gateways (e.g., Microsoft Agent 365), policy-as-code, and sandboxing are gaining traction among pharma teams, with early adopters seeing faster audits and better compliance.