The rise of generative AI (GenAI) is transforming how life sciences organizations approach evidence synthesis, regulatory submissions, and market access activities. As literature review workflows become more complex—and timelines more compressed—life science professionals are increasingly turning to GenAI-enabled platforms to streamline systematic literature reviews (SLRs), reduce manual burden, and scale evidence synthesis.

However, evaluating these platforms requires more than just comparing features or automation claims. When regulatory compliance, scientific rigor, and traceability are on the line, life science professionals must consider deeper questions of transparency, accuracy, and compliance.

This guide outlines five critical areas that life science professionals should examine when assessing GenAI-based literature review tools.

1. Transparency: Is the AI’s Decision-Making Visible and Explainable?

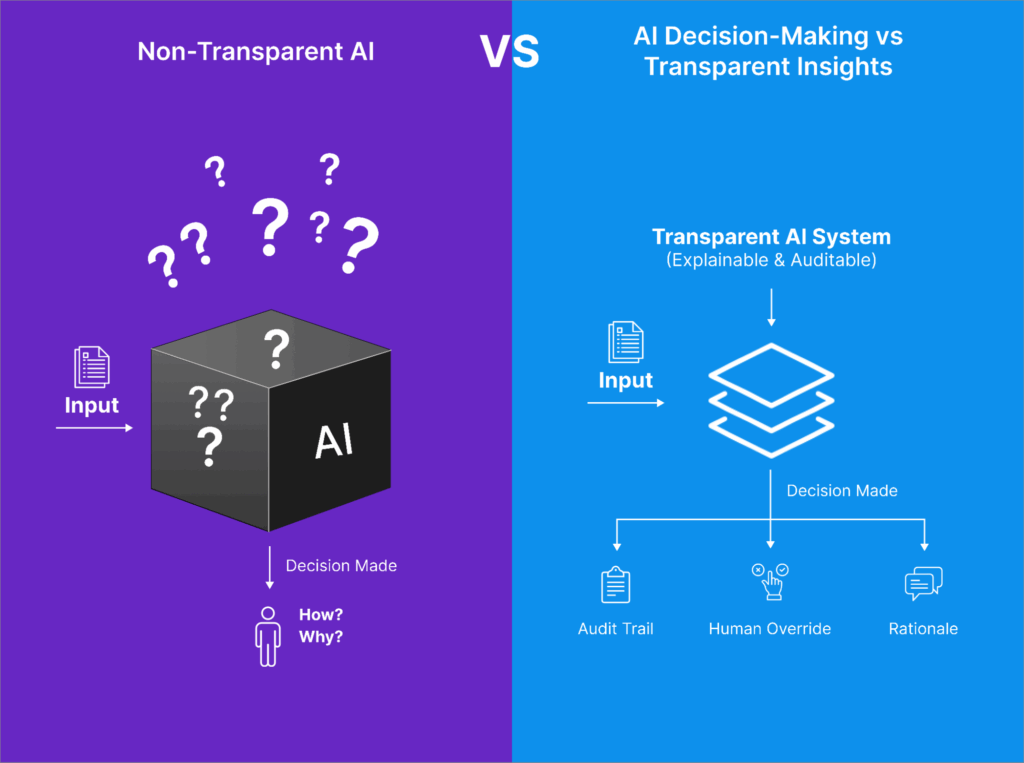

One of the most important (and often overlooked) factors in evaluating an AI platform is whether the system can explain why it made a particular decision, such as including or excluding a study. In traditional SLRs, human reviewers must document and justify inclusion or exclusion decisions to maintain scientific and regulatory credibility. The same standard should apply to AI-assisted workflows. A transparent AI platform should not only display its decisions but also provide explanations grounded in the protocol’s eligibility criteria (e.g., PICOS). For example, if an AI recommends excluding a study, it should clearly indicate whether that exclusion was based on population mismatch or irrelevant outcomes, ideally citing snippets or summaries extracted from the source material itself. Transparency also means that blinded human reviewers can inspect, confirm, or override AI suggestions, maintaining scientific control while benefiting from AI support.

Importantly, the platform should maintain a comprehensive audit trail that captures the rationale behind each action, whether taken by the AI or a reviewer, along with timestamps and user attribution. This is not only best practice for reproducibility and internal quality assurance, but also essential in regulatory and HTA settings, where bodies such as the EMA, FDA, or NICE may request clear evidence of how study selection decisions were made. Without transparency, AI tools function as black boxes, potentially undermining the very rigor that systematic reviews are designed to uphold.
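To make the audit-trail requirement concrete, the sketch below shows one possible shape for a decision record that carries a rationale, a timestamp, and attribution. It is an illustration only, not any vendor's schema: the `AuditEntry` class, its field names, the record IDs, and the model and reviewer names are all assumptions.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class AuditEntry:
    """One screening decision with who made it, when, and why (hypothetical schema)."""
    record_id: str         # citation identifier (illustrative format)
    decision: str          # "include" or "exclude"
    actor: str             # reviewer username or AI model name
    actor_type: str        # "human" or "ai"
    criterion: str         # PICOS element the decision rests on
    rationale: str         # justification for the decision
    evidence_snippet: str  # text quoted from the source that supports it
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_entry(log: list, entry: AuditEntry) -> None:
    """Append only: earlier entries are never edited, so overrides stay visible."""
    log.append(asdict(entry))


audit_log = []

# The AI suggests an exclusion and states which criterion it relied on.
append_entry(audit_log, AuditEntry(
    record_id="REC-0001", decision="exclude",
    actor="screening-model-v1", actor_type="ai",
    criterion="Population",
    rationale="Study enrolled children; protocol requires adults.",
    evidence_snippet="children aged 6 to 12 years",
))

# A human reviewer overrides the suggestion; both entries remain in the log.
append_entry(audit_log, AuditEntry(
    record_id="REC-0001", decision="include",
    actor="reviewer_a", actor_type="human",
    criterion="Population",
    rationale="Adult subgroup reported separately in the results.",
    evidence_snippet="a subgroup of adults (n = 48)",
))

print(json.dumps(audit_log, indent=2))
```

When evaluating a platform, it may help to ask whether its exported audit log contains at least this level of detail for every decision, including overrides.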

Key Questions to Ask:

- Are AI outputs accompanied by rationale or justification?

- Can human reviewers view, validate, or override AI recommendations?

- Is there a transparent record of how inclusion/exclusion decisions were made?

Why It Matters:

Regulatory agencies and payers increasingly require clear audit trails. Black-box AI that provides no insight into how conclusions were reached may put submissions at risk or weaken the credibility of your evidence package.

2. Accuracy: Does the Platform Deliver Reliable, Validated Results?

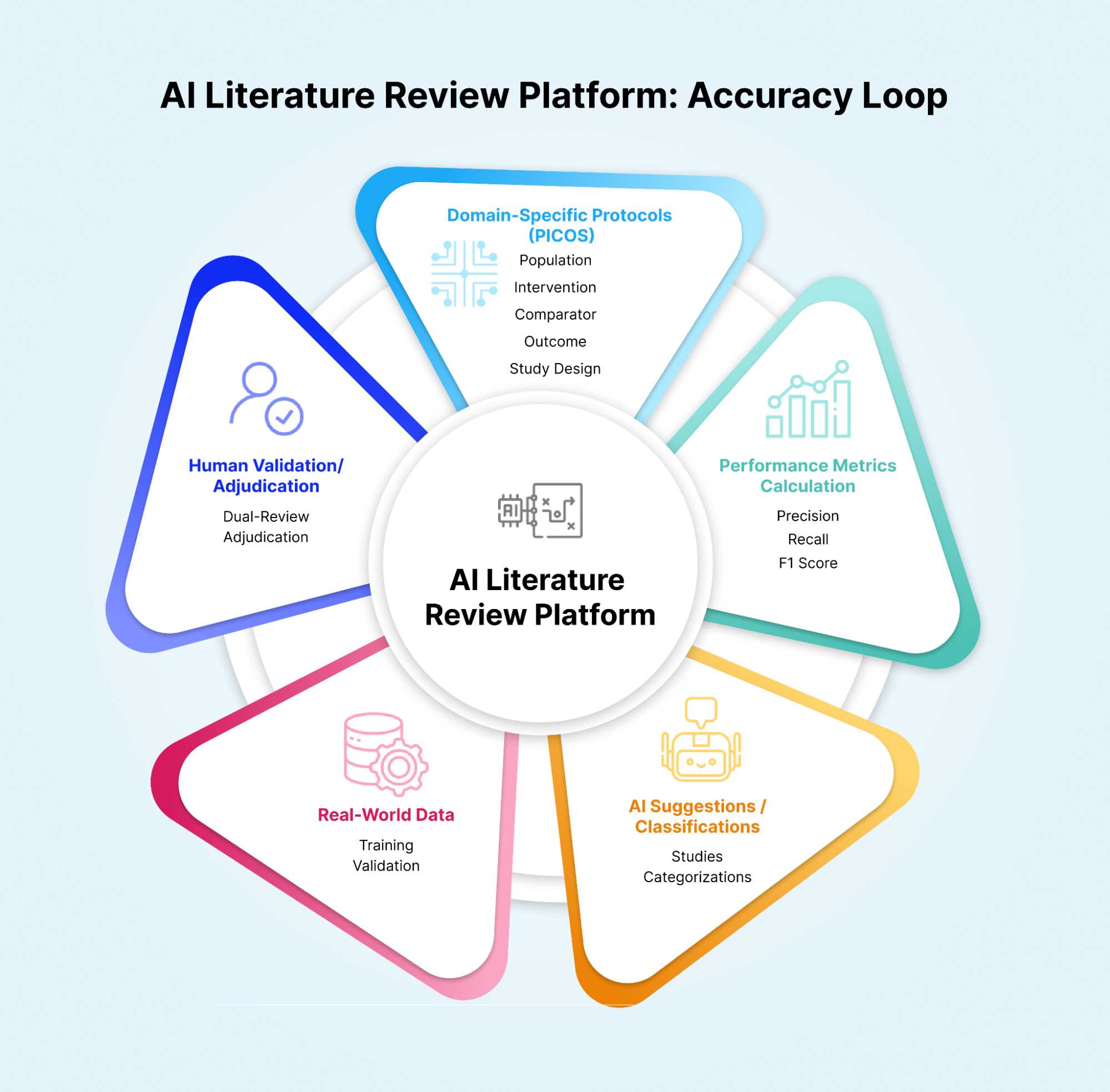

Many vendors claim “high accuracy,” but the definition of accuracy, and how it is measured, varies widely. Life science professionals should look beyond marketing and ask for validation data, ideally from real-world use cases that resemble their own. Accuracy directly impacts the integrity of downstream outputs such as HTA (Health Technology Assessment) submissions, GVDs (Global Value Dossiers), and clinical summaries. But accuracy in this context should be more than a vague claim. It must be measured through rigorous metrics like precision, recall, and F1 scores on domain-specific tasks (e.g., title and abstract screening or full-text classification). A high-performing model in general NLP tasks doesn’t guarantee accuracy in biomedical contexts, where nuance, terminology, and relevance scoring require domain alignment. Furthermore, platforms should enable dual-review or adjudication workflows, so that AI outputs can be validated by human reviewers, especially in high-risk or novel therapeutic areas. Some platforms may offer confidence scores or rank results by AI certainty, which can help teams triage faster without blindly trusting automation.
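For readers who want to check a vendor's numbers against a pilot of their own, the metrics named above can be computed from two lists of screening decisions. The following is a minimal sketch: the function name `screening_metrics` and the sample decisions are made up for illustration, and "include" is treated as the positive class.

```python
def screening_metrics(ai_decisions, reference_decisions):
    """Precision, recall, and F1 for the 'include' class.

    reference_decisions are the human-adjudicated labels, used as ground truth.
    """
    if len(ai_decisions) != len(reference_decisions):
        raise ValueError("Both lists must cover the same records.")

    pairs = list(zip(ai_decisions, reference_decisions))
    tp = sum(1 for a, r in pairs if a == "include" and r == "include")
    fp = sum(1 for a, r in pairs if a == "include" and r == "exclude")
    fn = sum(1 for a, r in pairs if a == "exclude" and r == "include")

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


# Eight illustrative records: human-adjudicated labels vs. AI suggestions.
reference = ["include", "include", "include", "exclude",
             "exclude", "exclude", "exclude", "include"]
ai = ["include", "include", "exclude", "include",
      "exclude", "exclude", "exclude", "include"]

metrics = screening_metrics(ai, reference)
print(metrics)  # 3 true positives, 1 false positive, 1 false negative
```

In screening, recall usually deserves particular attention, because a relevant study that the AI wrongly excludes may never be seen again, whereas a wrongly included study is typically caught at full-text review.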

Ultimately, life science professionals should request real-world validation data, not just synthetic benchmarks. Ask for performance metrics on sample reviews, use-case-specific results, or pilot data generated in partnership with other clients. An AI tool that doesn’t offer transparency into its performance—or worse, doesn’t measure it—is unlikely to meet the rigor required in scientific and regulatory settings.

Key Questions to Ask:

- What accuracy metrics are available (e.g., precision, recall, F1 score)?

- Are the models tuned to your protocol’s eligibility criteria (e.g., PICOS)?

- Does the platform support human validation, adjudication, or dual-review?

Why It Matters:

SLRs feed into high-stakes deliverables like HTA submissions, GVDs, clinical summaries, and more. A system that misclassifies studies or lacks context-specific tuning can compromise the validity of your findings, even if it’s fast.

3. Compliance: Is the Platform Built for Regulated Use?

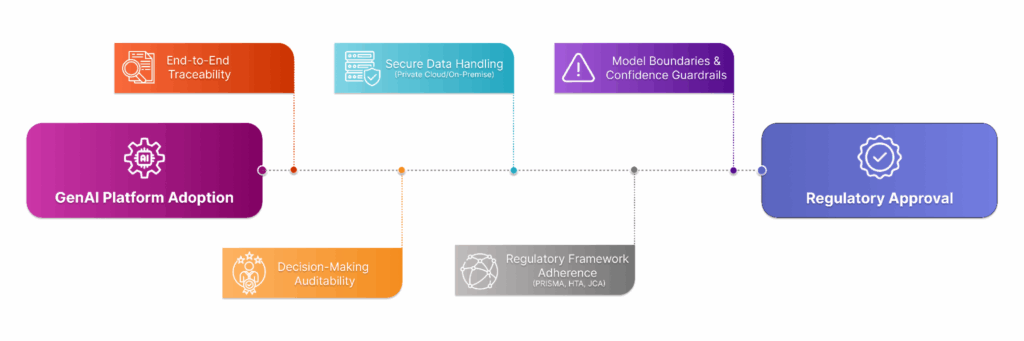

Ethical considerations in AI don’t end with fairness or bias mitigation; they extend to compliance, reproducibility, and scientific defensibility. In regulated environments like pharma, biotech, and healthcare, life science professionals must evaluate whether a GenAI platform aligns with reporting standards such as PRISMA and with evolving frameworks such as the EU HTA Regulation and its Joint Clinical Assessments (JCA). At a minimum, this requires that the platform offers traceability from every output to its source, whether it’s a PDF in a shared drive or a PubMed abstract. The platform must also preserve decision-making logs and reviewer attribution, so that each inclusion/exclusion, tag, or extraction decision can be tied to a user or AI model, providing a clear audit trail for internal QA or external review. Data handling is another key consideration. Life science professionals should look for platforms that support enterprise-grade privacy and deployment models, including private cloud or on-premises hosting, especially when dealing with proprietary search strategies or confidential sources. Lastly, ethical AI also means understanding model boundaries: a platform should provide warnings or guardrails when confidence is low or input quality is questionable. Platforms that overstate AI capability or obscure what’s under the hood can lead to compliance issues, reputational risk, and potentially flawed scientific output.
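As a rough illustration of the guardrail idea, the sketch below routes an AI suggestion to human review whenever it lacks a traceable source or falls below a confidence threshold. Everything here is hypothetical: the `AiSuggestion` fields, the `route` function, the placeholder source references, and the 0.85 threshold are assumptions rather than features of any particular platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AiSuggestion:
    record_id: str
    decision: str               # "include" or "exclude"
    confidence: float           # 0.0 to 1.0, assumed to be reported by the platform
    source_ref: Optional[str]   # e.g., a PubMed ID or file path; None if untraceable


def route(suggestion: AiSuggestion, min_confidence: float = 0.85):
    """Return ("proceed", []) or ("human_review", [reasons])."""
    reasons = []
    if suggestion.source_ref is None:
        reasons.append("no traceable source")
    if suggestion.confidence < min_confidence:
        reasons.append(
            f"confidence {suggestion.confidence:.2f} below {min_confidence:.2f}"
        )
    return ("human_review", reasons) if reasons else ("proceed", [])


suggestions = [
    AiSuggestion("REC-0001", "exclude", 0.97, "PMID:00000000"),  # placeholder ID
    AiSuggestion("REC-0002", "include", 0.55, "PMID:00000000"),  # low confidence
    AiSuggestion("REC-0003", "include", 0.99, None),             # no source link
]

for s in suggestions:
    status, reasons = route(s)
    print(s.record_id, status, reasons)
```

The threshold is a project-level choice; a sensible value would normally be set from pilot data rather than taken from a default.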

Key Questions to Ask:

- Is there traceability from AI outputs back to the original sources?

- Can the system be deployed in a secure (e.g., on-premises or private cloud) environment?

- Does the platform support regulatory-aligned workflows and documentation?

Why It Matters:

SLR data often flows into regulatory submissions or payer negotiations. Without proper safeguards and traceability, the use of GenAI can raise red flags or introduce compliance risks.

4. Flexibility: Can the Platform Support Diverse Workflows and Teams?

No two reviews are the same. A platform should accommodate different types of reviews and adjust to the structure of your team, whether you’re running reviews in-house or via external vendors. Life science professionals should look for platforms that support multiple review tasks (such as title and abstract screening, full-text screening, data extraction, and summarization) with options to customize how those tasks are executed. For example, some platforms may offer AI-aided review (where humans remain primary reviewers) or AI-as-reviewer models (where AI acts as a secondary reviewer), each with distinct implications for speed, oversight, and quality. It’s also essential that the platform allows tailored tagging systems, such as custom inclusion/exclusion criteria or PICOS-aligned labels, to reflect the specific needs of each review.

Review teams also vary in their technical expertise and internal resourcing. A good platform should be equally usable by scientific teams, regulatory reviewers, and medical writers, while also offering integration points (e.g., API access, citation management, or data export options) for power users or IT teams. Platforms that are overly prescriptive in design or assume one-size-fits-all workflows may become bottlenecks as project scope, volume, or complexity increases.
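To show how the AI-as-reviewer model can work in practice, the sketch below treats the AI as a second reviewer and flags any record where it disagrees with the human reviewer, or has given no decision, for adjudication. The function name `find_conflicts`, the record IDs, and the decisions are invented for illustration.

```python
def find_conflicts(human_decisions, ai_decisions):
    """Compare two reviewers' decisions, keyed by record ID.

    Returns the records that need adjudication: disagreements, plus records
    the AI has not screened (its decision is reported as None).
    """
    conflicts = []
    for record_id, human_decision in human_decisions.items():
        ai_decision = ai_decisions.get(record_id)
        if ai_decision != human_decision:
            conflicts.append({
                "record_id": record_id,
                "human": human_decision,
                "ai": ai_decision,
            })
    return conflicts


human = {"REC-0001": "include", "REC-0002": "exclude", "REC-0003": "include"}
ai = {"REC-0001": "include", "REC-0002": "include"}  # REC-0003 not yet screened

for conflict in find_conflicts(human, ai):
    print(conflict)
```

In an AI-aided model, by contrast, the AI's suggestion would be shown to the human reviewer up front rather than compared afterwards, which is faster but gives up the independence of the two decisions.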

Key Questions to Ask:

- Does the platform support both AI-aided and AI-as-reviewer modes?

- Can the tool handle different therapeutic areas or study types?

- Is there support for customizing prompts, tags, and data fields?

Why It Matters:

Rigid workflows limit scalability. A platform that adapts to your processes, not the other way around, will yield better results—and fewer headaches during implementation.

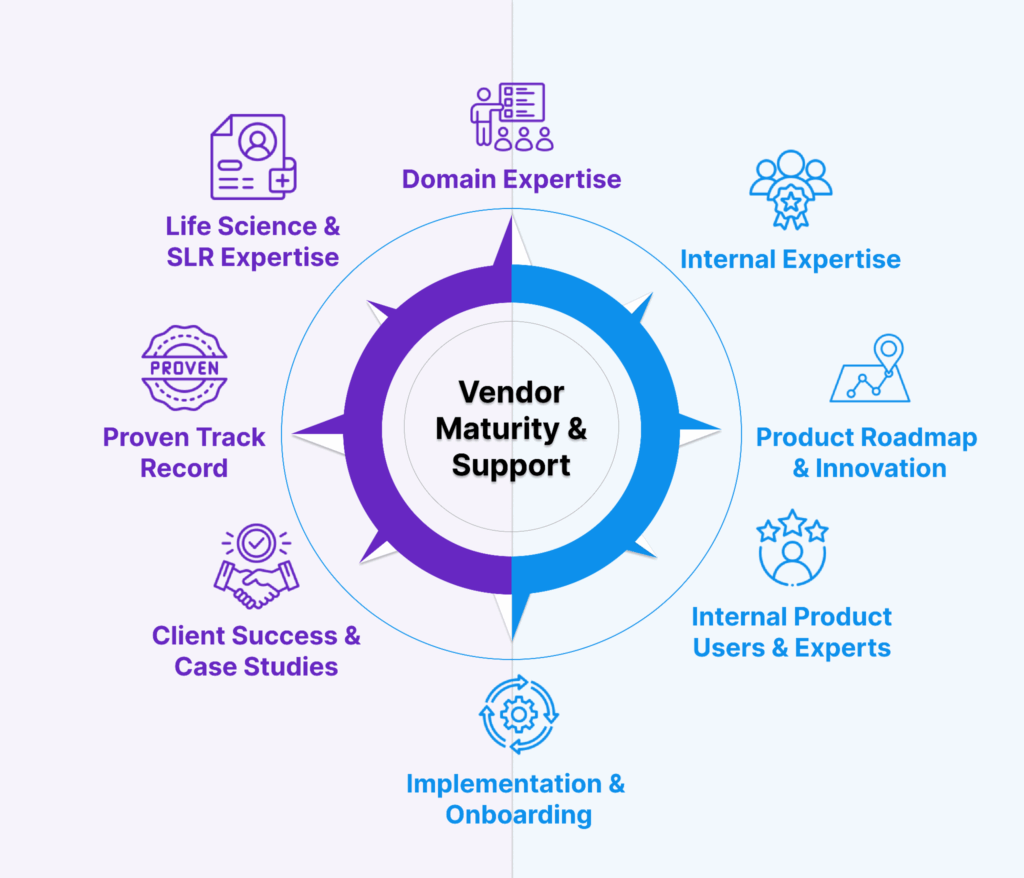

5. Vendor Maturity & Support: Is There Substance Behind the Software?

Beyond technical features, life science professionals should evaluate the maturity and credibility of the vendor. Look for teams with domain expertise, real clients, and a commitment to continuous improvement. Ideally, the vendor should have an internal team that uses the software to conduct literature reviews, along with super-users who can support new users and share best practices for getting the most out of the platform’s AI features.

In a rapidly evolving space like GenAI, vendor maturity can make the difference between a successful deployment and a costly misfire. A platform may offer promising features, but if the vendor lacks the operational maturity, scientific expertise, or implementation support to back them up, real-world impact may fall short. Life science professionals should begin by assessing the vendor’s track record in life sciences: Do they have experience with HEOR (Health Economics and Outcomes Research), medical affairs, or regulatory teams? Can they demonstrate success in supporting SLR workflows across multiple therapeutic areas or submission types? Do they use the software themselves to provide literature review services for full-service (non-SaaS) clients? Mature vendors will provide case studies, client testimonials, and pilots tailored to your use case, not generic demos or white papers. Just as important is the level of implementation and onboarding support. Does the vendor offer dedicated teams, documentation, or training to get your teams up and running quickly? Are there services available if you need to outsource or augment internal capacity?

Finally, life science professionals should inquire about product roadmap transparency: Are there regular updates? Are user feedback and regulatory changes driving feature development? An AI vendor that invests in domain expertise, client partnership, and continuous improvement is far more likely to scale with your organization’s evolving needs.

Key Questions to Ask:

- Does the vendor offer hands-on support or services to help with onboarding?

- Are there client references or published case studies?

- Is there a clear roadmap for product updates and GenAI improvements?

Why It Matters:

The best technology fails without proper support. Vendors that understand SLR workflows and life sciences nuances are more likely to deliver a successful deployment.

Bonus: Additional Evaluation Tips

- Run a Pilot: Ask for a limited-use trial or test against your own dataset.

- Ask for Audit Trails: Confirm that the platform keeps a record of reviewer decisions and AI involvement.

- Cross-Functional Involvement: Include stakeholders from HEOR, regulatory, medical affairs, and IT in your evaluation process.

Final Thoughts

GenAI is reshaping literature review workflows, but not all AI-enabled platforms are created equal. As a life science professional, your responsibility is to select a tool that not only automates tasks but also enhances transparency, safeguards scientific integrity, and aligns with compliance expectations.

By focusing on transparency, accuracy, compliance, flexibility, and vendor maturity, you’ll be better equipped to select a platform that delivers meaningful, scalable, and trustworthy results in your evidence generation efforts.